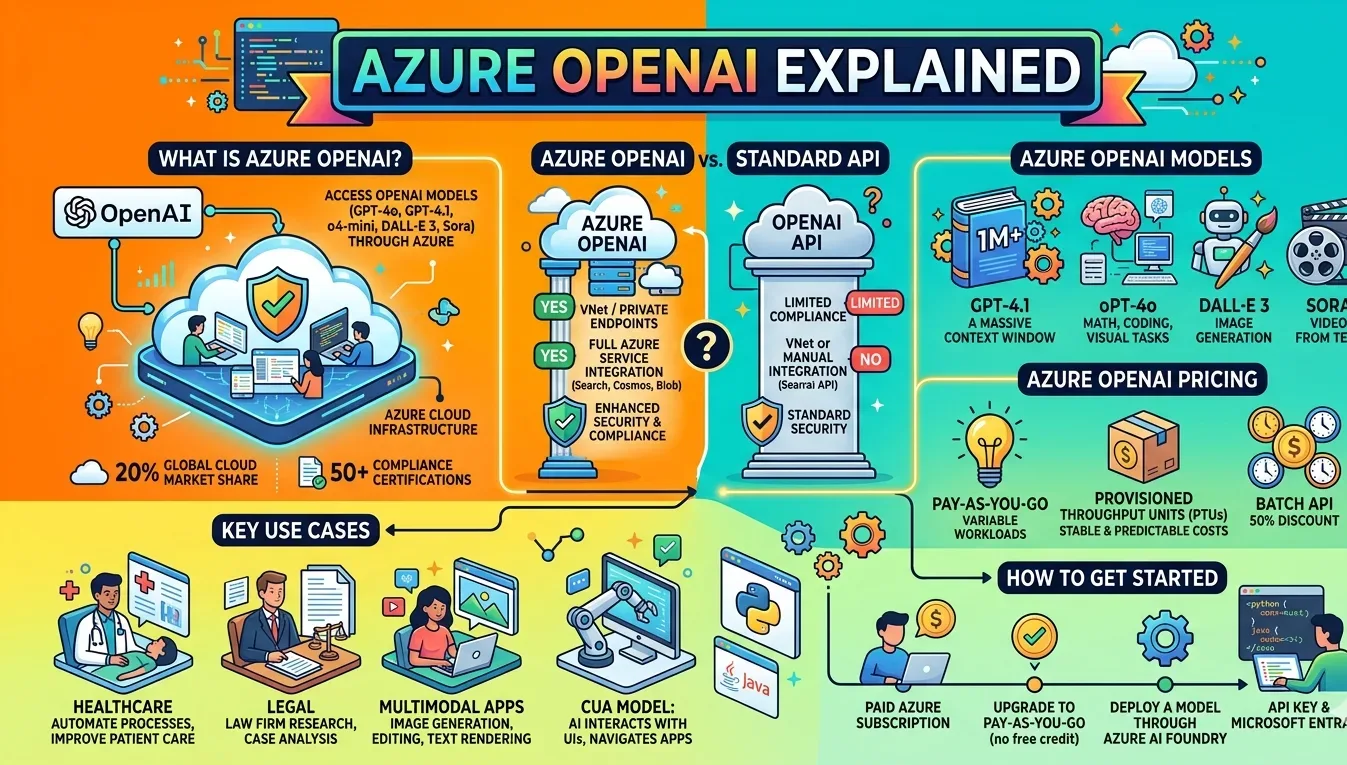

Azure OpenAI is Microsoft’s cloud service that gives developers and enterprises access to OpenAI’s models — including GPT-4o, GPT-4.1, o4-mini, DALL-E 3, and Whisper — through the Azure platform. Rather than calling the OpenAI API directly, users run the same models inside Azure’s infrastructure, with Microsoft’s security and compliance layers built in. Microsoft co-develops the API alongside OpenAI, so the interface stays compatible with the standard OpenAI SDK.

What Is Azure OpenAI Service?

Azure OpenAI Service is a managed API product delivered through Azure AI Foundry. It exposes OpenAI’s large language models within Azure’s cloud environment, allowing teams to connect their data, storage, and other services directly to those models without leaving the platform. Customer data is not used to retrain models, and the service holds over 50 regional compliance certifications, including HIPAA, ISO 27001, and SOC 2.

Microsoft Azure holds 20% of the global cloud infrastructure market, second only to AWS. That existing footprint is a large part of why enterprises prefer to access OpenAI’s models here rather than through a separate vendor relationship.

| Metric | Value |
|---|---|
| Azure cloud market share | 20% |
| Compliance certifications | 50+ |
| Deployment regions | 27 |
| Batch API discount | 50% |

Azure OpenAI vs the Standard OpenAI API

Both services use identical underlying models. The differences sit in infrastructure, access control, and compliance — not model capability.

| Feature | Azure OpenAI | OpenAI API |
|---|---|---|
| Infrastructure | Microsoft Azure datacenters | OpenAI-managed |
| Compliance (HIPAA, SOC 2, ISO) | Yes | Limited |
| VNet / Private Endpoints | Yes | No |
| Model training on customer data | No | No |
| Azure service integration | Native | Manual |
| Model release timing | Slight delay | Day-of release |

For teams already running workloads in Azure, the native integrations with Azure AI Search (formerly Cognitive Search), Cosmos DB, Blob Storage, and Azure Machine Learning remove the friction of connecting those systems to an external AI API. Organizations building cloud development workflows around these tools find that consolidation straightforward.

Azure OpenAI Models

Azure OpenAI supports models across text, reasoning, audio, image, and video tasks. The GPT-5 series includes flagship general-purpose models with a 400K token context window. GPT-4.1 handles advanced reasoning and long-context tasks with a context window of up to 1 million tokens. o4-mini targets math, coding, and visual tasks with a 200K token context window. DALL-E 3 handles image generation, while Sora generates video from text prompts.

For automated software engineering tasks, OpenAI’s coding agent — built on the o3 architecture and retrained for real-world engineering work — is accessible through Azure environments, enabling code review, bug fixes, and pull request generation at scale.

Figure: Context window by model (thousands of tokens)

Azure OpenAI Pricing

Billing runs on a token-based model. A token is roughly three-quarters of a word. The total charge is the cost of input tokens plus the cost of output tokens, each billed at its own rate per million tokens consumed. Model pricing varies substantially: GPT-5 runs at $1.25 per million input tokens and $10 per million output tokens, while GPT-3.5 Turbo costs a fraction of a cent per thousand tokens.
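As a rough illustration of the billing arithmetic, the sketch below estimates the charge for a single request from its token counts. The GPT-5 rates are the ones quoted in this article; the function name and the example token counts are made up for illustration, and real rates depend on model, region, and deployment type.

```python
from decimal import Decimal

# Rates quoted in this article for GPT-5, in USD per million tokens.
GPT5_INPUT_RATE = Decimal("1.25")
GPT5_OUTPUT_RATE = Decimal("10")
TOKENS_PER_MILLION = Decimal(1_000_000)


def estimate_cost(input_tokens: int, output_tokens: int,
                  input_rate: Decimal = GPT5_INPUT_RATE,
                  output_rate: Decimal = GPT5_OUTPUT_RATE) -> Decimal:
    """Return the estimated charge in USD for one request.

    Input and output tokens are priced separately, so each count is
    multiplied by its own per-million rate before summing.
    """
    input_cost = Decimal(input_tokens) * input_rate / TOKENS_PER_MILLION
    output_cost = Decimal(output_tokens) * output_rate / TOKENS_PER_MILLION
    return input_cost + output_cost


# Hypothetical request: 2,000 prompt tokens and 500 completion tokens.
cost = estimate_cost(2_000, 500)
print(f"${cost:.4f}")  # $0.0075
```

Output tokens dominate the bill here even though there are fewer of them, which is why long completions are usually the first thing to trim when costs climb.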

Pay-as-you-go (Standard)

Standard pricing charges per token consumed. It suits variable or unpredictable workloads — development, testing, and applications where daily usage fluctuates. No upfront commitment is required. For teams exploring AI platforms on browser-based devices, this tier is typically where they start.

Provisioned Throughput Units (PTUs)

PTUs reserve a fixed amount of model processing capacity, offering predictable performance and stable monthly costs. Provisioned reservations — monthly or annual — reduce spend by up to 70% compared to hourly PTU pricing. Production deployments with consistent, high-volume usage benefit most from this model.
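To make the reservation saving concrete, here is a minimal sketch comparing a month of hourly PTU billing with a reserved rate. The hourly price per PTU, the PTU count, and the function name are all hypothetical placeholders, not published Azure prices; only the 70% ceiling comes from the text above.

```python
from decimal import Decimal

HOURS_PER_MONTH = Decimal(730)  # average month: 8,760 hours / 12


def monthly_ptu_cost(ptus: int, hourly_rate: Decimal,
                     reservation_discount: Decimal = Decimal(0)) -> Decimal:
    """Monthly cost in USD for `ptus` units at `hourly_rate` per PTU-hour.

    `reservation_discount` is a fraction between 0 and 1 taken off the
    hourly-billed total (0 means plain hourly billing).
    """
    if not Decimal(0) <= reservation_discount <= Decimal(1):
        raise ValueError("reservation_discount must be between 0 and 1")
    hourly_total = Decimal(ptus) * hourly_rate * HOURS_PER_MONTH
    return hourly_total * (Decimal(1) - reservation_discount)


# Hypothetical deployment: 100 PTUs at an assumed $1.00 per PTU-hour.
hourly = monthly_ptu_cost(100, Decimal("1.00"))
reserved = monthly_ptu_cost(100, Decimal("1.00"), Decimal("0.70"))
print(f"hourly:   ${hourly:.2f}")    # hourly:   $73000.00
print(f"reserved: ${reserved:.2f}")  # reserved: $21900.00
```

The 70% figure is an upper bound, so a real quote may land well short of the reserved number shown here.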

Batch API

The Batch API processes large volumes of non-interactive requests with results returned within 24 hours. It carries a 50% discount versus standard global pricing, making it practical for large-scale inference tasks where real-time response is not required.
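Batch jobs are submitted as a JSONL file with one request per line. The sketch below builds such a file body in memory, assuming the OpenAI-style batch line layout (`custom_id`, `method`, `url`, `body`) and a hypothetical deployment name; the helper name is illustrative, and the exact fields should be checked against the current Azure documentation before use.

```python
import json


def build_batch_jsonl(prompts: list[str], deployment: str) -> str:
    """Return JSONL text with one chat-completion request per prompt.

    Each line carries a `custom_id` so results, which may come back in
    any order, can be matched to the request that produced them.
    """
    lines = []
    for index, prompt in enumerate(prompts):
        request = {
            "custom_id": f"task-{index}",
            "method": "POST",
            "url": "/chat/completions",
            "body": {
                # On Azure this is the deployment name, not the base model name.
                "model": deployment,
                "messages": [{"role": "user", "content": prompt}],
            },
        }
        lines.append(json.dumps(request))
    return "\n".join(lines)


# Hypothetical deployment name and prompts.
jsonl = build_batch_jsonl(
    ["Summarize document A.", "Summarize document B."],
    deployment="my-batch-deployment",
)
print(jsonl)
```

The resulting text would be saved to a `.jsonl` file and uploaded when creating the batch job; the upload and job-creation steps are omitted here.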

Figure: Approximate input token pricing by model ($ per million tokens)

Azure OpenAI Use Cases

McKesson uses Azure OpenAI to automate manual processes and improve patient-facing operations. Harvey, a legal technology company, uses it to scale law firm research and case analysis. Sports organizations use Azure AI for generating real-time stats and match summaries. A nonprofit reported using the service to improve surgical demand forecasting and patient care planning globally.

For developers building multimodal applications, the service also powers AI image generation workflows through DALL-E 3 and GPT-image-1, both of which support image editing and instruction-following alongside generation. GPT-image-1 adds accurate text rendering and support for image input — features DALL-E 3 does not include.

The Computer-Using Agent (CUA) model is a newer addition that lets AI interact with graphical interfaces, navigate applications, and run multi-step tasks using natural language instructions. It runs through the Responses API and targets workflow automation.

How to Get Started with Azure OpenAI

Access requires a paid Azure subscription. The $200 free credit available to new Azure accounts cannot be applied to Azure OpenAI services — a pay-as-you-go upgrade is required before deploying any model. The process takes under ten minutes from an existing Azure account.

After upgrading, navigate to Azure AI Services in the portal, select Azure OpenAI, and deploy a model through Azure AI Foundry. Authentication supports both API key and Microsoft Entra ID. For production deployments, Microsoft recommends Entra ID for role-based access control. SDKs are available for several languages, including Python and Java. The Azure CLI supports scripted deployments. For developers evaluating cloud-native productivity stacks, Azure OpenAI’s integration with Microsoft 365 and existing Azure infrastructure is often the deciding factor.
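Once a model is deployed, a first call can be made against the REST API with API-key authentication. The sketch below uses only the Python standard library and builds the request without sending it. The resource endpoint, deployment name, key, and `api-version` value are placeholders or assumptions to replace with values from your own resource, and the helper names are illustrative.

```python
import json
import urllib.request


def build_chat_request(endpoint: str, deployment: str, api_key: str,
                       user_message: str,
                       api_version: str = "2024-10-21") -> urllib.request.Request:
    """Build a chat-completions POST request for an Azure OpenAI deployment."""
    url = (f"{endpoint.rstrip('/')}/openai/deployments/{deployment}"
           f"/chat/completions?api-version={api_version}")
    body = {"messages": [{"role": "user", "content": user_message}]}
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        # API-key auth uses the `api-key` header.
        headers={"Content-Type": "application/json", "api-key": api_key},
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(request) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]


# Placeholder values; nothing is sent until send(request) is called.
request = build_chat_request(
    endpoint="https://my-resource.openai.azure.com",
    deployment="my-gpt-deployment",
    api_key="<your-api-key>",
    user_message="Say hello.",
)
print(request.full_url)
```

With Entra ID, the `api-key` header would be replaced by an `Authorization: Bearer <token>` header carrying a token issued for the resource. In practice the official SDKs wrap these details.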

Growing enterprise cloud adoption has also pushed Azure OpenAI into more standard IT procurement conversations — particularly in healthcare, legal, and financial services, where data residency and compliance certification matter as much as model capability.

FAQs

What is Azure OpenAI used for?

Azure OpenAI is used to build enterprise AI applications including chatbots, document summarization tools, code generation systems, image generators, and voice agents. It runs OpenAI’s models inside Azure’s infrastructure with enterprise security and compliance built in.

Is Azure OpenAI the same as ChatGPT?

No. Azure OpenAI is a developer API that provides access to the same underlying models that power ChatGPT, but through Microsoft Azure’s infrastructure. It has no chat interface — developers build applications on top of it using the REST API or SDK.

How much does Azure OpenAI cost?

Pricing is token-based. GPT-5 starts at $1.25 per million input tokens. GPT-3.5 Turbo is far cheaper. Pay-as-you-go billing and provisioned throughput units are the two main options. A Batch API provides 50% off standard rates for non-time-sensitive tasks.

Does Azure OpenAI store my data?

Microsoft does not use customer prompts or completions to train or improve Azure OpenAI models. Data handling is governed by the Azure data privacy and security framework. Customers retain ownership of their input and output data.

What is the difference between Azure OpenAI and the OpenAI API?

Both access the same models. Azure OpenAI adds Microsoft’s compliance framework (HIPAA, SOC 2, ISO 27001), VNet/Private Endpoint support, and native integration with Azure services. The standard OpenAI API releases new models faster but lacks these enterprise features.