Picture this: You’re in the middle of your peak sales period and your e-commerce platform is humming along. Despite surging customer demand, your Kubernetes infrastructure is seamlessly adapting to handle the increased traffic. That’s because behind the scenes, your GKE cluster autoscaler is intelligently selecting the best compute resources from a range of options you’ve defined. No late-night scrambles for resources, no lost sales due to capacity issues — just a smooth, uninterrupted customer experience.

With the new custom compute class API in Google Kubernetes Engine (GKE), this scenario is not just a vision, it’s a reality. By giving you fine-grained control over your infrastructure choices, GKE can now prioritize and utilize a variety of compute and accelerator options based on your specific needs ensuring that your applications, including AI workloads, always have the resources they need to thrive. This results in improved cost efficiency, enhanced resource availability, and the flexibility to configure your infrastructure exactly how you want it.

What challenges does the compute classes API address?

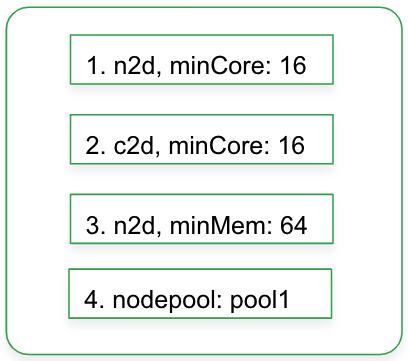

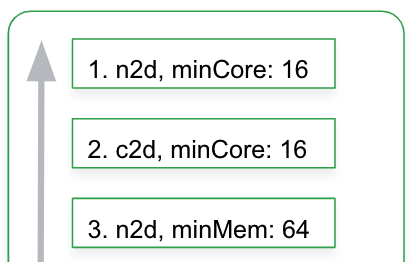

1. GKE custom compute classes maximize obtainability and reliability by providing fall-back compute priorities as a list of candidates’ node characteristics or statically defined node pools. This increases the chances of successful autoscaling while giving you control over the resources that get spun up. If your first priority resource is unable to scale up, GKE will automatically try the second priority of node selection, and then continue to other lower priorities on the list.

Compute classes support accelerators and related attributes, making it ideal for AI use cases. For instance, you can specify GPU or TPU priorities, related storage needs, and node consolidation delays and thresholds.

2. Reconcile to preferential infrastructure. Without custom compute classes, during a scaling event, your top priority nodes may not be available, so your pods land on lower priority instances… and remain there until you manually intervene.

Custom compute classes enable active migration of workloads to preferential node shapes, such as those based on cost or performance optimization. If workloads initially land on lower priority nodes, GKE automatically tries to migrate workloads to higher priority nodes over time, as availability allows.

3. Platform teams need ways to centralize default node attributes for related workloads, including characteristics such as machine families, minimum cores, spot/on-demand, GPU and TPU settings, and autoscaling consolidation parameters so that these settings can be easily consumed by application teams without complicating the developer experience.

Compute classes provide a consistent node configuration API that abstracts away node configuration complexity for developers. App teams can consume compute classes with a simple nodeSelector label or rely on namespace-scoped default compute classes.

Control, flexibility and a streamlined developer experience

Custom compute classes give platform teams increased control and flexibility, while shielding application developers from unnecessary overhead and configuration complexity. In addition to defining fallback compute priorities, the compute class can be used to tune autoscaling attributes like utilization thresholds and consolidation delays. Compute classes currently support both CPUs as well as accelerators with some limitations (see documentation).

Platform teams craft and deploy custom compute classes as Kubernetes Custom Resources (CRs). This Kubernete-native approach means they can be rolled into GitOps and Infrastructure-as-Code (IaC) playbooks. Here’s an example custom compute class:

- code_block

- <ListValue: [StructValue([('code', 'apiVersion: cloud.google.com/v1rnkind: ComputeClassrnmetadata:rn name: my-classrnspec:rn priorities:rn rules:rn – machineFamily: n2rn minCores: 16rn – machineType: e2-standard-16rn – nodepools: [pool1, pool2]rn autoscalingPolicy:rn consolidationDelayMinutes: 20rn nodepoolAutoCreation:rn enabled: true'), ('language', ''), ('caption', <wagtail.rich_text.RichText object at 0x3e6e3089e130>)])]>

In this example, the GKE Cluster Autoscaler first tries to spin up a n2 node with a minimum of 16 vCPU cores. If, for any given reason, such nodes are unavailable, CA tries the e2-standard-16 resource. And if that is unavailable, CA tries to spin up nodes from two previously defined nodepools. To avoid extra node churn, the consolidation delay setting ensures nodes stay around for 20 minutes after they are no longer needed. Node auto-provisioning (NAP) is enabled in this example and creates new node pools automatically as needed.

See the documentation for all configuration options.

Workloads can consume custom compute classes via a nodeSelector label, as follows:

- code_block

- <ListValue: [StructValue([('code', 'apiVersion: apps/v1rnkind: Deploymentrnmetadata:rn name: workloadrnspec:rn replicas: 1rn selector:rn matchLabels:rn app: workloadrn template:rn metadata:rn labels:rn app: workloadrn spec:rn nodeSelector:rn cloud.google.com/compute-class: my-classrn containers:rn – name: testrn image: gcr.io/google_containers/pausern resources:rn requests:rn cpu: 1rn memory: "1Gi"'), ('language', ''), ('caption', <wagtail.rich_text.RichText object at 0x3e6e3089eac0>)])]>

To further streamline the developer experience, you can designate a custom compute class as the default for specific Kubernetes namespaces. When a compute class is set as the namespace default, any workloads deployed within that namespace automatically inherit the compute class configuration, eliminating the need for repetitive workload-level settings. To create a namespace-level default custom compute class, set a namespace label using the cloud.google.com/default-compute-class key, for example:

- code_block

- <ListValue: [StructValue([('code', 'kubectl label namespaces default cloud.google.com/default-compute-class=my-class'), ('language', ''), ('caption', <wagtail.rich_text.RichText object at 0x3e6e3089edc0>)])]>

What customers are saying

Delivery Hero is an online food-ordering service based in Germany that operates in around 70 countries, and uses GKE to run their core service offering. Delivery Hero has been using the GKE custom compute class API extensively, and is pleased with the offering.

“The new custom compute class feature allows us to provide our internal platform customers with clusters that can accommodate their individual needs with ease, confidently scaling on demand. With the priority-based rules, we have a much more fine-grained control to serve our customers their preferred instance family for their workloads, without having to manage node pools ourselves thanks to its integration with node auto-provisioning. It also allows us to build cost-efficient systems, by utilizing spot instances automatically where it makes sense. We don’t need to worry about instance availability anymore, thanks to the fallback rules kicking in if some instance type is at low capacity. And if our preferences cannot be met at some point in time, the active migration feature has us covered – constantly working to reconcile the nodes in the cluster to match our needs as best as it can.” – Heiko Rothe, (Staff Systems Engineer) & Kevin Nandu, Senior Systems Engineer, Delivery Hero

Watch custom compute classes in action

This video provides an overview of what custom classes are and how to use them in practice:

We’re just getting started

At Google Cloud, we strive to make it easy for you to configure and manage your GKE environment; the declarative compute classes node API is just the latest example, and complements other existing ways to configure your nodes. The features we’re launching today represent a first look at the custom compute class API; stay tuned for more features.