Trends in the data space such as generative AI, distributed storage systems, unstructured data formats, MLOps, and the sheer size of datasets are making it necessary to expand beyond the SQL language to truly analyze and understand your data.

To give users more flexibility in their choice of programming language, we announced BigQuery DataFrames at Next ’23. Currently in preview, this new open source library gives customers the productivity of Python while allowing the BigQuery engine to handle the core processing. Offloading the Python processing to the cloud enables large-scale data analysis and provides seamless production deployments along the data-to-AI journey.

BigQuery DataFrames is a unified Python API on top of BigQuery’s managed storage and BigLake tables. It lets developers discover, describe, and understand BigQuery data by providing a Python-compatible interface that can automatically scale to BigQuery-sized datasets. BigQuery DataFrames also makes it easy to move into a full production application by automatically creating SQL objects like BigQuery ML inference models and Remote Functions.

This is all done from the new BigQuery DataFrames package, which is unified with BigQuery’s user permission model, letting Python developers use their skills and knowledge directly inside BigQuery. A bigframes.DataFrame object can be handed off to the Vertex AI SDK, and the BigQuery DataFrames Python package is integrated with Google Cloud notebook environments such as BigQuery Studio and Colab Enterprise, as well as partner solutions like Hex and Deepnote. It can also be installed into any Python environment with a simple ‘pip install bigframes’ command.

Since the large-scale processing happens on the Google Cloud side, a small laptop is enough to get started. BigQuery DataFrames contains two APIs for working with BigQuery — bigframes.pandas and bigframes.ml. In this blog post, we will look at what can be done with these two APIs.

bigframes.pandas

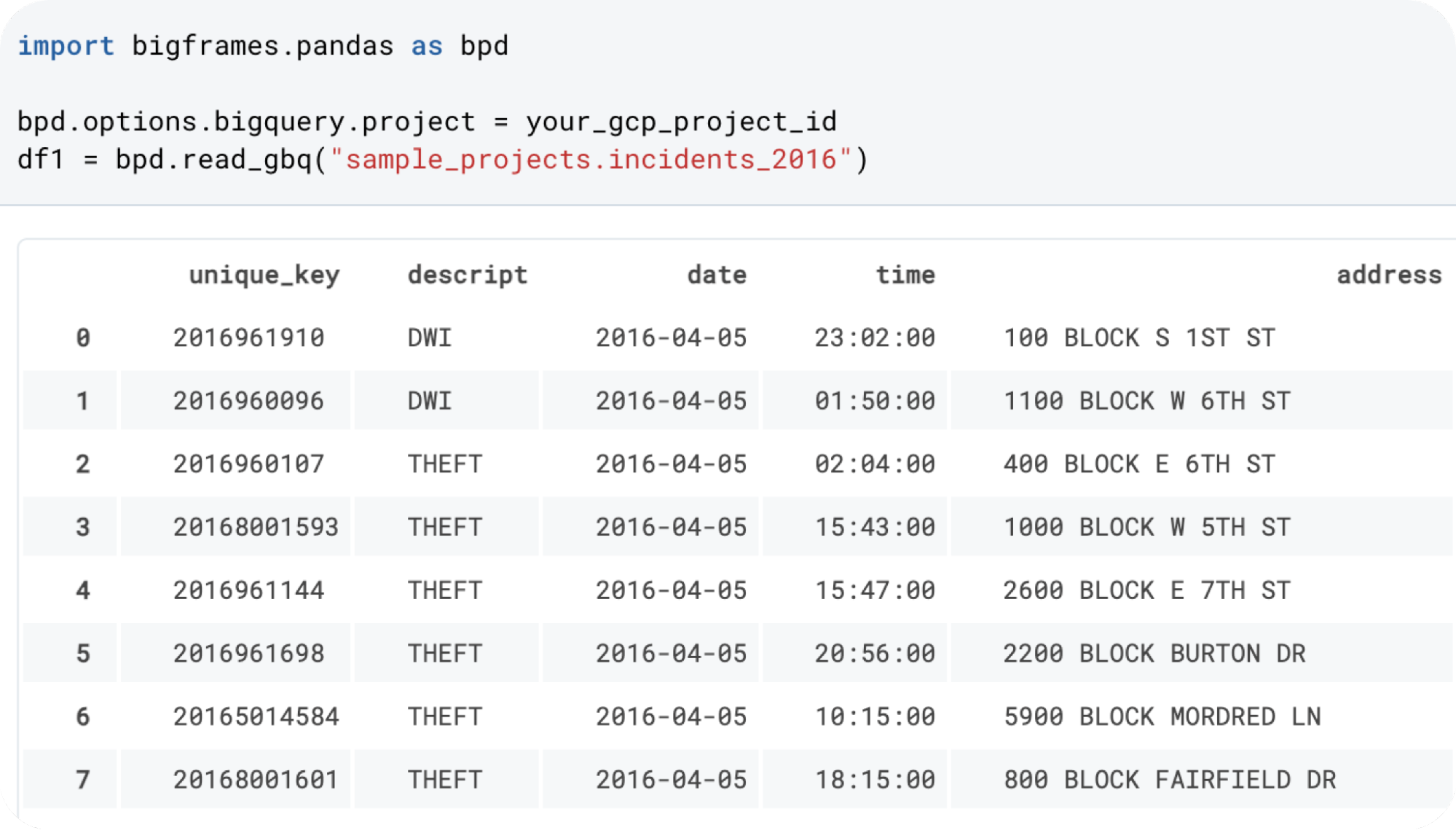

Loosely based on the open source pandas API, the bigframes.pandas API is primarily designed for exploratory data analysis, advanced data manipulation, and data preparation.

The BigQuery DataFrames version of the pandas API provides programming abstractions such as DataFrames and Series that pandas users are familiar with. Additionally, it comes with some distinctions that make it easier to work with large datasets. The core capabilities of bigframes.pandas today are:

Unified data Input/Output (IO): One of the primary challenges data scientists face is the fragmentation of data across various sources. BigQuery DataFrames addresses this challenge head-on with robust IO methods. Irrespective of whether the data is stored in local files, S3, GCS, or others, it can be seamlessly accessed and incorporated into BigQuery DataFrames. This interoperability not only facilitates ease of access but also effectively breaks down data silos, enabling cohesive data analysis by making disparate data sources interactable within a unified platform.

```python
import bigframes.pandas as bf

# Connect to a BigQuery table and provide a unique column for the
# DataFrame index to keep the data in place on BigQuery
bq_df = bf.read_gbq("table", index_col=["unique_column"])

# Read a local CSV file
local_df = bf.read_csv("my_data.csv")
```

Data manipulation: Traditional workflows often involve using SQL to preprocess large datasets to a manageable size for pandas, at times losing critical data nuances. BigQuery DataFrames fundamentally alters this dynamic. With access to over 200 pandas functions, data scientists can now engage in complex operations, like handling multi-level indexes and ordering, directly within BigQuery using Python.

```python
# Obtain and prepare the data
bq_df = bf.read_gbq("bigquery-public-data.ml_datasets.penguins")

# Filter down to the data we want to analyze
adelie_data = bq_df[bq_df.species == "Adelie Penguin (Pygoscelis adeliae)"]

# Drop the columns we don't care about
adelie_data = adelie_data.drop(columns=["species"])

# Drop rows with nulls to get our training data
training_data = adelie_data.dropna()

# Take a peek at the training data
training_data.head()
```

Seamless transitions back to pandas: A developer can use bigframes.pandas for large-scale processing to get to the set of data they want to work with, and then move back to traditional pandas DataFrames for refined analyses on the processed dataset. Whether for advanced statistical methodologies, ML techniques, or data visualization, this interchangeability with pandas ensures that data scientists can operate within an environment they are familiar with.

```python
pandas_df = bq_df.to_pandas()
```
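One way to use this handoff is to reduce the data inside BigQuery first and download only the small result. The sketch below assumes `bq_df` is a bigframes DataFrame like the one read earlier; the helper name `top_groups_as_pandas` and its parameters are hypothetical and not part of the library.

```python
def top_groups_as_pandas(bq_df, group_col, value_col, n=10):
    """Aggregate with the BigQuery engine, then download only the result.

    Hypothetical helper: `bq_df` is assumed to be a bigframes DataFrame.
    """
    summary = (
        bq_df.groupby(group_col)[value_col]  # group and select one column
        .mean()                              # average per group
        .sort_values(ascending=False)        # largest averages first
        .head(n)                             # keep at most n groups
    )
    # Only the aggregated rows (at most n) are brought into local pandas.
    return summary.to_pandas()
```

For instance, `top_groups_as_pandas(bq_df, "island", "body_mass_g", n=3)` would return a pandas Series, assuming the table has `island` and `body_mass_g` columns.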

bigframes.ml

Large-scale ML training: The ML API enhances BigQuery’s ML capabilities by introducing a Python-accessible version of BigQuery ML. It streamlines large-scale generative AI projects, offering an accessible interface reminiscent of scikit-learn. Notably, BigQuery DataFrames also integrates the latest foundation models from Vertex AI. To learn more, check out this blog on applying generative AI with BigQuery DataFrames.

```python
# Define a preprocessing and linear regression pipeline using the ML API
from bigframes.ml.linear_model import LinearRegression
from bigframes.ml.pipeline import Pipeline
from bigframes.ml.compose import ColumnTransformer
from bigframes.ml.preprocessing import StandardScaler, OneHotEncoder

preprocessing = ColumnTransformer([
    ("onehot", OneHotEncoder(), ["island", "species", "sex"]),
    ("scaler", StandardScaler(), ["culmen_depth_mm", "culmen_length_mm", "flipper_length_mm"]),
])

model = LinearRegression(fit_intercept=False)

pipeline = Pipeline([
    ("preproc", preprocessing),
    ("linreg", model),
])

# View the pipeline
pipeline
```
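The pipeline above is defined but not yet trained. The following is a minimal sketch of fitting and scoring it, assuming `training_data` is a bigframes DataFrame that still contains all six feature columns, and assuming `body_mass_g` is the label column (the post does not name the label). The helper `fit_and_score` is hypothetical.

```python
# Feature columns used by the ColumnTransformer in the pipeline.
FEATURE_COLUMNS = [
    "island", "species", "sex",
    "culmen_depth_mm", "culmen_length_mm", "flipper_length_mm",
]


def fit_and_score(pipeline, training_data, label_column="body_mass_g"):
    """Fit the pipeline and return its evaluation metrics.

    Hypothetical helper; the label column name is an assumption.
    """
    X = training_data[FEATURE_COLUMNS]
    y = training_data[[label_column]]
    pipeline.fit(X, y)
    # Scoring on the training data is for illustration only; a held-out
    # split would give a more honest estimate of model quality.
    return pipeline.score(X, y)
```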

Scalable Python functions: You can also bring your ML algorithms, business logic, and libraries by deploying remote functions from BigQuery DataFrames. Creating user-developed Python functions at scale has often been a bottleneck in data science workflows. BigQuery DataFrames addresses this with a simple decorator, enabling data scientists to run scalar Python functions at BigQuery’s scale.

```python
# bq_connection_name is assumed to hold the name of an existing BigQuery connection
@bf.remote_function([int], int, bigquery_connection=bq_connection_name)
def nth_prime(n):
    prime_numbers = [2, 3]
    i = 3
    if 0 < n <= 2:
        return prime_numbers[n - 1]
    elif n > 2:
        while True:
            i += 1
            status = True
            # Trial division: i is prime if nothing in 2..i/2 divides it
            for j in range(2, int(i / 2) + 1):
                if i % j == 0:
                    status = False
                    break
            if status:
                prime_numbers.append(i)
            if len(prime_numbers) == n:
                break
        return prime_numbers[n - 1]
    else:
        return -1
```

A full sample is provided here.
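Because the decorated function runs remotely, it can be worth checking the scalar logic locally before deploying it. The sketch below is a plain-Python variant without the decorator. It is not the code from the sample: it uses trial division by earlier primes and the hypothetical name `nth_prime_local`, but it returns the same values for the same inputs.

```python
def nth_prime_local(n: int) -> int:
    """Return the n-th prime (1-indexed), or -1 when n < 1."""
    if n < 1:
        return -1
    primes = []
    candidate = 2
    while len(primes) < n:
        # A candidate is prime if no earlier prime up to its square root divides it.
        if all(candidate % p != 0 for p in primes if p * p <= candidate):
            primes.append(candidate)
        candidate += 1
    return primes[-1]


# Quick local checks before wrapping the logic in a remote function.
print([nth_prime_local(k) for k in range(1, 6)])  # [2, 3, 5, 7, 11]
print(nth_prime_local(0))  # -1
```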

Vertex AI integration: Additionally, BigQuery DataFrames can hand off to the Vertex AI SDK for advanced modeling. The latest version of the Vertex AI SDK can directly take a bigframes.DataFrame as input without the developer having to worry about how to move or distribute the data.

```python
import vertexai
from bigframes.ml.model_selection import train_test_split as bf_train_test_split
from sklearn.linear_model import LogisticRegression

# df is assumed to be a bigframes.DataFrame holding the iris dataset
species_categories = {
    "versicolor": 0,
    "virginica": 1,
    "setosa": 2,
}
df["species"] = df["species"].map(species_categories)

# Assign an index column name
index_col = "index"
df.index.name = index_col

feature_columns = df[["sepal_length", "sepal_width", "petal_length", "petal_width"]]
label_columns = df[["species"]]
bf_train_X, bf_test_X, bf_train_y, bf_test_y = bf_train_test_split(
    feature_columns, label_columns, test_size=0.2
)

# Enable remote mode for remote training
vertexai.preview.init(remote=True)

# Wrap classes to enable Vertex remote execution
LogisticRegression = vertexai.preview.remote(LogisticRegression)

# Instantiate model
model = LogisticRegression(warm_start=True)

# Train model on Vertex using BigQuery DataFrames
model.fit(bf_train_X, bf_train_y)
```

Hex integration

Hex’s polyglot support (SQL + Python) gives users more ways to work with BigQuery data. Users can authenticate to their BigQuery instance and seamlessly transition between SQL and Python.

Hex is thrilled to be partnering with Google Cloud on their new BigQuery DataFrames functionality! The new support will unlock the ability for our customers to push computations down into their BigQuery warehouse, bypassing usual memory limits in traditional notebooks.

Deepnote integration

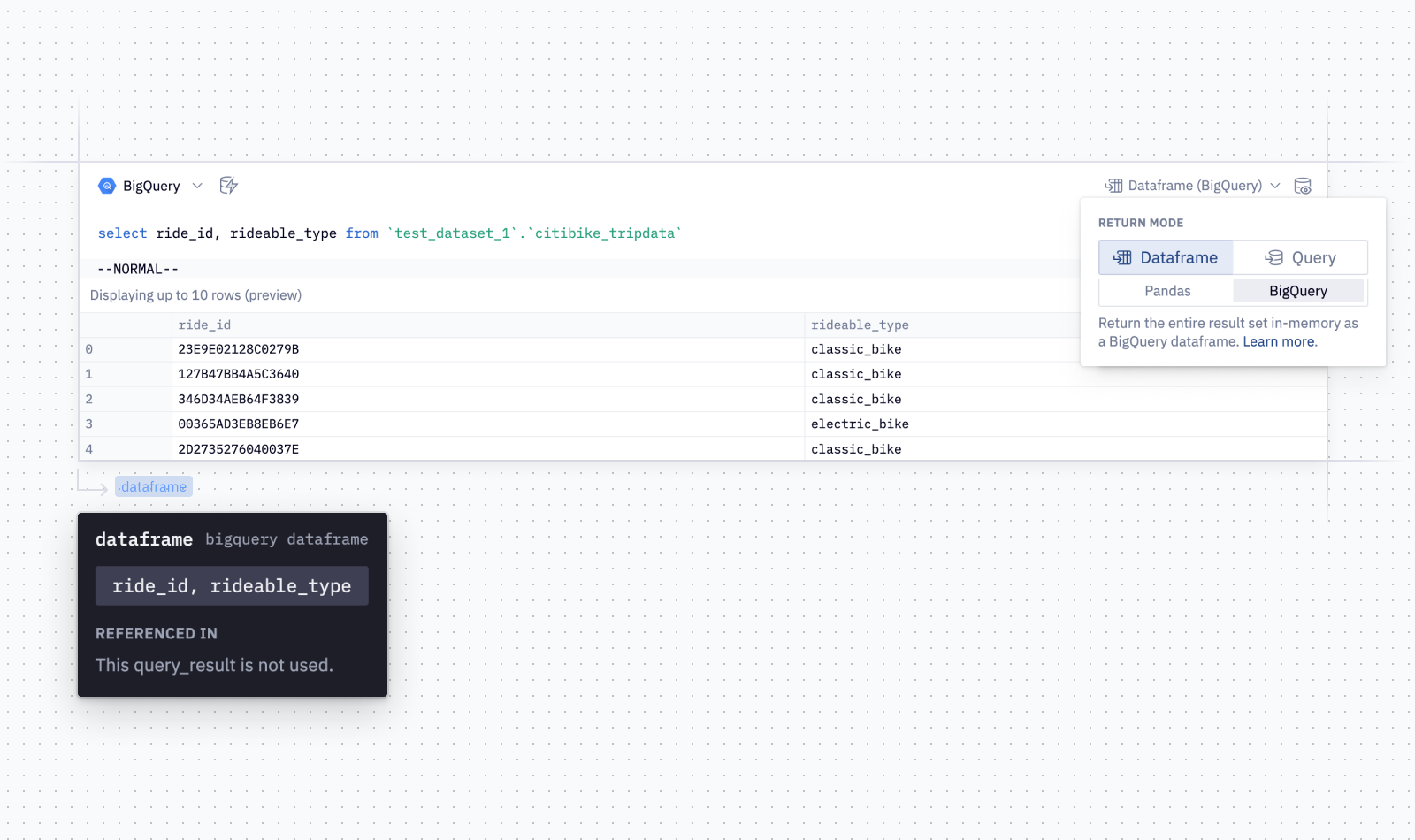

When connected to a Deepnote notebook, you can read, update or delete any data directly with BigQuery SQL queries. The query result can be saved as a dataframe and later analyzed or transformed in Python, or plotted with Deepnote’s visualization cells without writing any code. Learn more about Deepnote’s integration with BigQuery.

“Analyzing data and performing machine learning tasks has never been easier thanks to BigQuery’s new DataFrames. Deepnote customers are able to comfortably access the new Pandas-like API for running analytics with BigQuery DataFrames without having to worry about dataset size.” —Jakub Jurovych, CEO, Deepnote

Getting started

Watch this breakout session from Google Cloud Next ’23 to learn more and see a demo of BigQuery DataFrames. You can get started by using the BigQuery DataFrames quickstart and sample notebooks.